Introduction

A/B testing is an important method of learning customer preferences, and it lets marketing creatives avoid guesswork when deciding on design, interface, and copy direction. Should the ads be yellow or blue? Should the website be minimalist or maximalist? Instead of guessing, A/B testing puts these choices in front of real customers, one at a time, and their behaviour gives a clear answer as to which they prefer.

In fact, the importance of A/B testing cannot be overstated. Consistently conducting A/B tests is a necessity, not just a “once in a while” practice, because it offers a window into the preferences of a company’s customer base.

To better explain the benefits of A/B testing, first let’s examine what A/B testing actually is.

What is A/B testing?

A/B testing, also known as split testing, is a method of comparing two versions of the same digital marketing asset against each other to see which of the two performs better. A/B testing can be used for websites, Facebook Ads, emails, and more!

What’s so great about this test is that it enables marketing strategists to understand what works and what doesn’t.

How does an A/B test work?

To understand how A/B testing works, let’s take a look at the example below.

Kush’s goal is to get more newsletter sign-ups on a website page. The current page (referred to here as “the control”) is performing decently, but he wants to test whether a new landing page (referred to as “the variant”) could get more leads. Instead of jumping to conclusions about which landing page would perform better, Kush decides to run an A/B test.

He publishes both pages at the same time and splits incoming visitors at random, so that half see Website A (the control) and half see Website B (the variant). After a week, he compares the performance metrics of the two pages to decide which one gains more traction.

However, keep in mind that only one element should be tested at a time; otherwise it becomes difficult to tell what caused a difference in performance. For instance, if the only difference between Banner A and Banner B is the ad copy, it is easy to see why Banner A performs better. On the other hand, if the variations include different copy, imagery, and format, the two do not make a good basis for a 1:1 comparison, because the variant is almost an entirely different creative altogether.

Benefits of A/B testing

Now that A/B testing is clearly defined, let’s dive into the benefits of A/B testing and how it can impact marketing strategy.

a. Improve content

The phrase ‘Content is King’ still holds true. No one wants to click on something unrelatable, so content specialists always aim to produce content that provides value to the audience. A/B testing gives content writers the ability to filter out and improve ineffective content based on actual customer behaviour rather than their own speculation. When content is relevant and useful to the audience, over time it builds credibility and trust for the brand.

b. Reduce bounce rate

Bounce rates are a company’s worst enemy. Bounce rate is the percentage of visitors who land on a page and leave without interacting further or viewing another page. A high bounce rate means visitors are spending little time getting to know the brand they clicked on, which is terrible for brands because those visitors are unlikely to follow a call-to-action or buy a product or service. A/B testing allows marketers to test different versions of the same landing page to determine which gets a higher engagement rate, ultimately leading to a lower bounce rate.
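As a quick illustration of the definition, the sketch below computes the bounce rate for two versions of a landing page. It assumes a bounce is a session that left without a second interaction (analytics tools define this slightly differently), and the `PageStats` class and session counts are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Session counts for one landing-page version (hypothetical numbers)."""
    sessions: int
    single_page_sessions: int  # sessions that left without a second interaction


def bounce_rate(stats: PageStats) -> float:
    """Share of sessions that bounced, as a percentage."""
    if stats.sessions == 0:
        return 0.0
    return 100 * stats.single_page_sessions / stats.sessions


version_a = PageStats(sessions=2000, single_page_sessions=1300)
version_b = PageStats(sessions=2000, single_page_sessions=1100)

print(f"A: {bounce_rate(version_a):.1f}%")  # A: 65.0%
print(f"B: {bounce_rate(version_b):.1f}%")  # B: 55.0%
```

In this made-up example, version B keeps more visitors on the page, so it would be the one to carry forward, provided the difference holds up statistically.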

c. Increase conversion rate

If ad performance is an issue, A/B testing is a solid way to try to improve it. If the ad has a bold headline or important subtext but the ad manager is unsure whether it’s effective, they can create a variation with a different headline or subtext and compare the conversion rates of the two.

d. Easy to analyze

In order to achieve something, you first need to know what you want to achieve. Setting a goal before getting started is key to a successful campaign, as it sets a clearer path for a marketing strategy, whether it’s copywriting, running a test, or building a landing page. With A/B testing, the goal makes it quite clear which metrics should be measured, such as impressions, engagement, and conversion rates. For instance, if the goal is more engagement on a Facebook ad, then clicks, impressions, and post engagement are the metrics to look at when the report is ready. Whichever ad creative scores highest on those metrics sets the creative direction that should be prioritised in the future.

e. Quick results

Another perk of A/B testing is that it can quickly show what is working and what is not. As long as the test reaches a sample large enough to be statistically meaningful, it can provide actionable results that support quick, well-founded decisions, allowing marketing teams to focus on other priorities.

f. Multi-functional

There are no bad ideas when it comes to A/B testing, because the test itself is not biased and has no ‘preference’. Rather than anyone deciding upfront whether an idea works, the conclusion is drawn from the data the test collects. Not to mention that there are so many parameters that can potentially be tested, from themes and colours to fonts, imagery, and more.

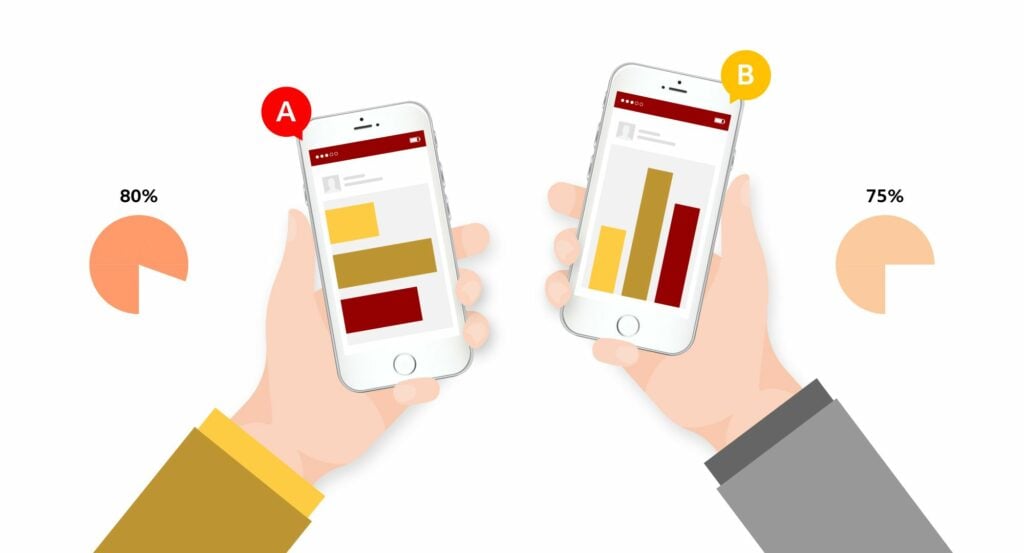

Besides testing ad or website content, another type of A/B test is audience testing: show the same ad or website version to two different audiences. For instance, Group A could consist of people aged 18–25, while Group B consists of people aged 26–30. This is one way to understand which audience your content appeals to more. Once the test is over, the results may show that one group performs better than the other, perhaps with more engagement or more conversions. Whatever the goal is, the group that helps you achieve it is the one you want to target.

g. Reduce risks

Even a well thought-out change to your marketing creatives could end up sabotaging your own ad campaign, email send-outs, or website traffic, because you can’t know in advance what your viewers will like or dislike. If the audience can’t relate to, or simply does not like, the new version of the creative material, sales can suffer. A/B testing helps determine what works and what doesn’t before a change is rolled out to everyone, which reduces decision-making time and gives creatives confidence in the end result.

Set up your A/B test in 10 steps

An A/B test has three stages: before, during, and after. Let’s quickly go through each stage so you can start testing your ads!

Before your A/B test

1. Identify the goal

Before running a test, clearly define its intention. What’s the purpose of this test? Is it to improve conversion rates, impressions, or something else? A clearly defined goal sets the criteria for a successful or unsuccessful test.

2. Pick one variable to test

While it’s great to be excited to test all sorts of variables, it’s best practice to test a single variable at a time. Otherwise, it will be hard to understand the exact element that causes a change in performance.

3. Create two versions of the ad (Control and Variation)

Before running the test, create two versions of the ad. The two ads should be identical except for the single variable being tested.

See the example below:

4. Determine the audience

Choosing the right audience gives you a clearer view of how your content is being received. It would be smart to pick an audience that has previously interacted with the brand, since there is already evidence that this audience responds to the brand’s ads. Of course, this step does not apply if the A/B test itself is being used to find the best-performing audience.

5. Decide how significant results need to be

Before starting the test, it is important to establish how significant results need to be to justify choosing one ad over another. For instance, a very small change (e.g. a different button colour) may not produce radically different results. If colour A gets 15 conversions while colour B gets 17, is that enough of a difference to declare a winner? To answer this, you need to look at statistical significance. Results are considered “significant” when they are unlikely to have occurred by chance. Testing at a 95% confidence level means that, if there were truly no difference between the two versions, a gap as large as the one observed would appear by chance less than once in every 20 tests. You can check the statistical significance of your results with a statistical significance calculator.
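The 15-versus-17 question above can be checked with a pooled two-proportion z-test, one common way to compare two conversion rates. This is a minimal sketch, not the method any particular calculator uses; it assumes, hypothetically, that each colour was shown to 1,000 visitors (the example doesn’t say how many), and `two_proportion_p_value` is an illustrative name.

```python
import math


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates
    (pooled two-proportion z-test, normal approximation)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # For a standard normal Z, P(|Z| >= |z|) = erfc(|z| / sqrt(2))
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical: 1,000 visitors saw each button colour
p = two_proportion_p_value(conv_a=15, n_a=1000, conv_b=17, n_b=1000)
print(f"p-value = {p:.2f}")
print("significant at 95%" if p < 0.05 else "not significant at 95%")
```

Under these assumptions the p-value comes out at roughly 0.72, far above 0.05, so 15 versus 17 conversions would not be enough to declare a winner. With very small conversion counts the normal approximation gets rough, and an exact test is a safer choice.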

During your A/B test

6. Choose an A/B testing tool

Running an A/B test requires an A/B testing tool. Both Google and Facebook have their own A/B testing tools for measuring ad results.

7. Test your ads at the same time

To rule out outside factors that could change the results, it is important to run both ads at exactly the same time. That way, differences in results are much less likely to be caused by something else, such as a holiday or a competitor’s promotion.
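For a website test, one common way to run both versions at the same time is to split visitors at random as they arrive. The sketch below assumes you control the site’s code and have a stable visitor ID (for example from a cookie); `assign_variant` and the experiment label are hypothetical. Ad platforms’ built-in A/B tools typically handle this split for you.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "newsletter-landing-page") -> str:
    """Deterministically assign a visitor to 'A' (control) or 'B' (variant).

    Hashing the experiment name together with the visitor ID gives a stable,
    roughly 50/50 split, so both versions run side by side during the same
    period and a returning visitor always sees the same version.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return "A" if bucket < 50 else "B"


counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)  # roughly an even split between A and B
```

Hashing rather than flipping a coin on every page view keeps each visitor’s experience consistent, which matters when people return to the page more than once during the test.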

8. Make sure the test runs for a sufficient amount of time

Depending on how detailed you want the statistics to be, short, medium, or long timeframes can be set for tests, to make sure there is ample data to analyse. For instance, when running an A/B test on a website, the duration depends on how many visitors the site receives per day. If a website averages 100 visitors per day and the test needs a minimum of 500 visitors to produce meaningful results, the test would need to run for approximately 5 days (500 ÷ 100). How many visitors a test actually needs depends on your baseline conversion rate and how small a difference you want to detect. There are different ways to calculate it, but one way is to use a sample size calculator.

After your A/B test

9. Analyse your results

Although there are multiple metrics to look at in an A/B test report, it’s important to focus only on those that relate to the goal. For instance, if the goal is a high conversion rate, then it’s a no-brainer to look at CVR (conversion rate). On the other hand, if increasing engagement is the main goal, then impressions and clicks are the appropriate metrics. Remember, focus on goals!
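To make “focus on goals” concrete, the sketch below reads the same hypothetical report two ways. It assumes CTR means clicks per impression and CVR means conversions per click (some tools measure conversions per visitor instead); the class, the goal names, and all numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class VariantReport:
    """Hypothetical summary of one version's results from an A/B test report."""
    name: str
    impressions: int
    clicks: int
    conversions: int

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks per impression (an engagement metric)."""
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def cvr(self) -> float:
        """Conversion rate: conversions per click."""
        return self.conversions / self.clicks if self.clicks else 0.0


# Map each goal to the metric that measures it.
GOAL_METRICS = {
    "engagement": lambda r: r.ctr,
    "conversion": lambda r: r.cvr,
}


def pick_winner(reports: list[VariantReport], goal: str) -> VariantReport:
    """Return the version that scores highest on the metric tied to the goal."""
    return max(reports, key=GOAL_METRICS[goal])


reports = [
    VariantReport("A", impressions=20_000, clicks=400, conversions=40),
    VariantReport("B", impressions=20_000, clicks=500, conversions=35),
]
for goal in GOAL_METRICS:
    best = pick_winner(reports, goal)
    print(f"{goal}: version {best.name}")
```

Here version B wins on engagement (2.5% vs 2.0% CTR) while version A wins on conversion (10% vs 7% CVR), which is why the goal has to be fixed before the report is read. Before acting on either winner, it is still worth checking that the gap is statistically significant.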

10. Next steps

Once a winning marketing asset has been found, roll it out and use what you learned to plan future campaigns. However, keep in mind that there is always room for improvement, so keep testing your content!

Conclusion

Remember, A/B testing is one of the many powerful ways to collect information from customers. It helps marketers make better decisions and also helps provide more relevant content to viewers. Keep in mind that performing a single A/B test is typically insufficient. Consistently performing these tests and optimising content based on the data is the right way to go.

Good luck!

If you’re interested and ready to improve your creative performance through A/B testing, you can drop us a note at hello@admiral.digital. We’re more than excited to help your brand grow!